GoodAI started as a research and development initiative inside Keen Software House in January 2014, when CEO Marek Rosa invested $10 million in the project.

At GoodAI, we focus on three areas:

- LLM/LTM

- Applied AI & Robotics

- AI Game Development

AI People

At GoodAI, we’re interested in applying AI technology to video games. How can large language models be used to plan the actions of game characters, improve their conversational skills, expand their personalities, and make interaction with them engaging and immersive?

AI People is a fun sandbox game where you create and play your own scenarios. The game features AI-powered NPCs that learn and interact based on your decisions.

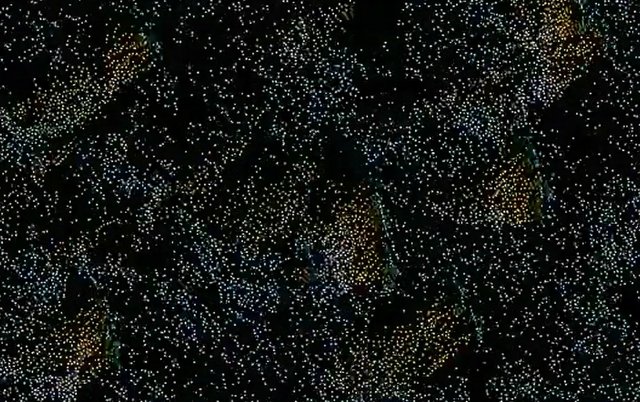

GoodAI Groundstation

We believe that safety should be a human right. GoodAI Groundstation empowers individuals to control drones effortlessly, democratizing safety and making it accessible to everyone.

Our primary areas of focus include crime prevention, search and rescue, and wildlife protection and monitoring.

LLM/LTM - Charlie Mnemonic

We are developing Long-Term Memory (LTM) systems to expand LLM agents' context window, enabling continual learning from interactions and environmental dynamics. Our goal is to create agents capable of lifelong learning, using every new experience to enhance future performance.

Our long-term goal is to build general artificial intelligence that will automate cognitive processes in science, technology, business, and other fields. We conduct our own research, advocate fundamental AI research at the EU governmental level, and forge a community of like-minded groups through the GoodAI Grants program.

GoodAI is a company of around 30 talented people working around the world.